4.1 How to understand Shannon’s information entropy

Entropy measures the degree of our lack of information about a system.

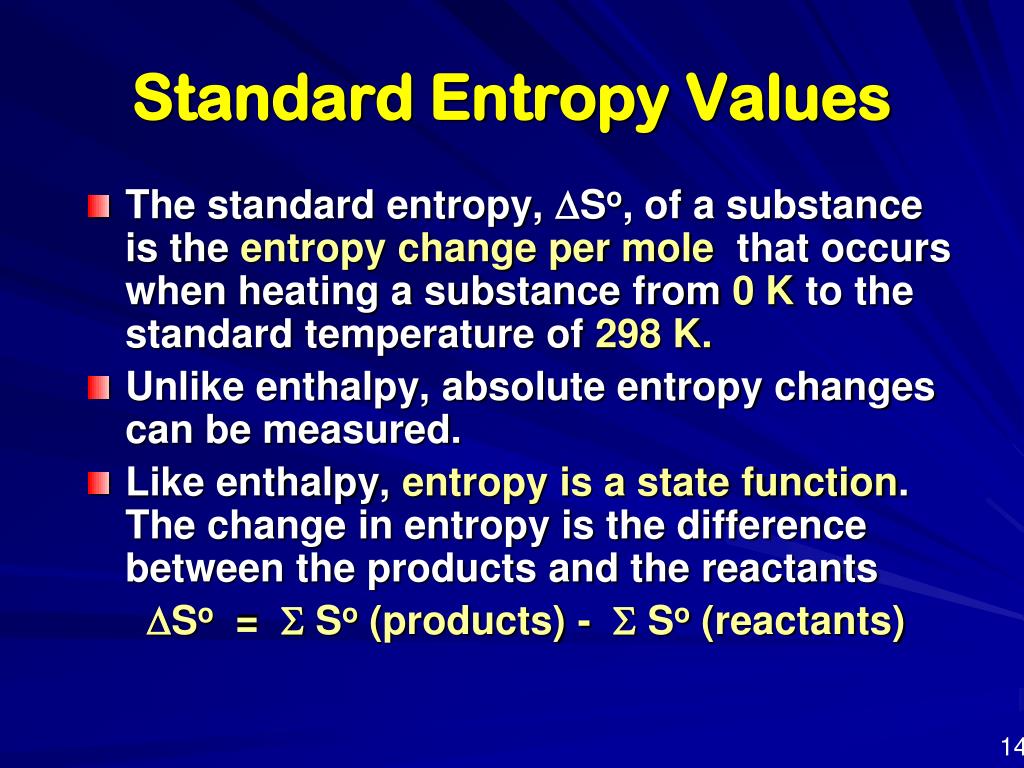

This expression is called Shannon entropy or information entropy. Unfortunately, in information theory the symbol for entropy is H and the constant $k_B$ is absent. We have changed their notation to avoid confusion.

Entropy Definition

Entropy is the measure of the disorder of a system. Equivalently, it is the measure of a system’s thermal energy per unit temperature that is unavailable for doing useful work. It is denoted by the letter S and is traditionally given in units of joules per kelvin (J/K). According to the second law of thermodynamics, the entropy of a system can only decrease if the entropy of another system increases. The change in entropy of a system can be positive or negative.

Specific entropy may be expressed relative to a unit of mass, typically the kilogram (unit: J kg⁻¹ K⁻¹). Alternatively, in chemistry, it is referred to one mole of substance, in which case it is called the molar entropy, with units of J mol⁻¹ K⁻¹. Standard entropies of formation are given in molar quantities because they assume the process creates 1 mole of the substance.

We associate adding heat with an increase in entropy. If you want to think conceptually, think about what adding heat will do to the system. The magnitude of the change is related to the amount of energy the system currently has, which is directly related to its temperature in kelvin. So we look at the amount of heat in joules and compare it to the temperature at which we applied the heat: for a reversible process at constant temperature, $\Delta S = q_{\text{rev}}/T$. Take, for example, ice melting at 273 K. At 273 K ice and liquid water are in equilibrium, but if we apply heat we can cause the ice to melt, and this process is thermodynamically reversible. This allows us to measure $\Delta S$ directly from how much heat we apply to make the process proceed. Entropy doesn't depend on the pathway that we take.
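As a rough illustration of the ice example, the sketch below divides the heat absorbed by the temperature at which it is absorbed. The molar enthalpy of fusion of water (about 6.01 kJ/mol) is an assumed textbook value that does not appear in the text above, and the function name `entropy_change` is made up for this example.

```python
# Entropy change for reversibly melting one mole of ice at its melting point.
# ASSUMPTION (not from the text): the molar enthalpy of fusion of water
# is taken as roughly 6.01 kJ/mol.
ENTHALPY_OF_FUSION_J_PER_MOL = 6010.0
MELTING_POINT_K = 273.0  # the temperature used in the text


def entropy_change(heat_joules: float, temperature_kelvin: float) -> float:
    """Return delta S = q_rev / T for heat added reversibly at constant T."""
    if temperature_kelvin <= 0:
        raise ValueError("absolute temperature must be positive")
    return heat_joules / temperature_kelvin


delta_s = entropy_change(ENTHALPY_OF_FUSION_J_PER_MOL, MELTING_POINT_K)
print(f"{delta_s:.1f} J/(mol K)")  # prints "22.0 J/(mol K)"
```

Heat flowing out of the system (a negative `heat_joules`, as in freezing) gives a negative entropy change of the same magnitude.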
The best explanation I can give is that in order to measure entropy for a process we can exploit the fact that it's a state function. Absolute temperature is the temperature measured in kelvins. If the temperature changes during the process, then for small changes it is usually a good approximation to take T to be the average temperature, which avoids trickier math (calculus). In SI, entropy is expressed in units of joules per kelvin (J K⁻¹), or equivalently kg m² s⁻² K⁻¹.

If we write the definition of Shannon entropy in terms of cologarithms, we have

$$H = \sum_{i=1}^{N} p_i \operatorname{colog}_b p_i$$

which looks better, except it contains the unfamiliar colog.

These days entropy is almost always measured in units of bits, i.e. using logarithms base 2. When logs are taken base e, the result is in units of nats. And when the logs are taken base 10, the result is in units of dits. So binary logs give bits, natural logs give nats, and decimal logs give dits. Bits are sometimes called “shannons” and dits were sometimes called “bans” or “hartleys.” The codebreakers at Bletchley Park during WWII used bans. The unit “hartley” is named after Ralph Hartley. Hartley published a paper in 1928 defining what we now call Shannon entropy using logarithms base 10. Shannon cites Hartley in the opening paragraph of his famous paper A Mathematical Theory of Communication.
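To make the unit conventions concrete, here is a minimal sketch that computes the entropy of a fair coin with the three logarithm bases. The function name `shannon_entropy` and the fair-coin example are my own choices for illustration, not something taken from Shannon's or Hartley's papers.

```python
import math


def shannon_entropy(probabilities, base=2.0):
    """Return -sum(p * log_base(p)); zero-probability outcomes contribute 0."""
    if any(p < 0 for p in probabilities):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probabilities), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    return -sum(p * math.log(p, base) for p in probabilities if p > 0)


fair_coin = [0.5, 0.5]
bits = shannon_entropy(fair_coin, base=2)       # binary logs give bits
nats = shannon_entropy(fair_coin, base=math.e)  # natural logs give nats
dits = shannon_entropy(fair_coin, base=10)      # decimal logs give dits

print(bits, nats, dits)
```

The three results describe the same quantity in different units: one bit equals ln 2 nats and log10 2 dits.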

In equations, the symbol for thermodynamic entropy is the letter S. Entropy is an extensive property of a thermodynamic system, which means it depends on the amount of matter that is present. The reaction is said to be spontaneous when the.

The Shannon entropy of a random variable with N possible values, each with probability $p_i$, is defined to be

$$H = -\sum_{i=1}^{N} p_i \log_b p_i$$

At first glance this looks wrong, as if entropy is negative. But you’re taking the logs of numbers less than 1, so the logs are negative, and the negative sign outside the sum makes everything positive.

There’s one place where I would be tempted to use the colog notation, and that’s when speaking of Shannon entropy. I suppose people spoke of cologarithms more often when they did calculations with logarithm tables.

Transfer Entropy (TE) is an information-theoretic measure commonly used in neuroscience to measure the directed statistical dependence between a source and a target time series, possibly also conditioned on other processes.
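The sign argument is easy to check numerically. The sketch below lists each term of the sum separately for a small distribution; the distribution and the helper name `entropy_terms` are assumptions chosen only for illustration.

```python
import math


def entropy_terms(probabilities):
    """Return the individual terms -p * log2(p) of the Shannon entropy sum."""
    # log2(p) <= 0 whenever 0 < p <= 1, so each term is non-negative.
    return [-p * math.log2(p) if p > 0 else 0.0 for p in probabilities]


probs = [0.5, 0.25, 0.25]  # hypothetical three-outcome distribution
terms = entropy_terms(probs)
entropy_bits = sum(terms)

print(terms)         # prints [0.5, 0.5, 0.5]
print(entropy_bits)  # prints 1.5
```

Every logarithm in the sum is negative or zero, and the leading minus sign flips each term, so the total cannot be negative.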

The SI unit of entropy is the joule per kelvin, derived from the unit of energy divided by the unit of temperature.

The term “cologarithm” was once commonly used but now has faded from memory. Here’s a plot of the frequency of the terms cologarithm and colog from Google’s Ngram Viewer. The cologarithm base b is the logarithm base 1/b, or equivalently, the negative of the logarithm base b.
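The two descriptions of the cologarithm can be compared directly. This is only a sketch: Python has no built-in cologarithm, so the `colog` helper below is a hypothetical function written for this example.

```python
import math


def colog(x, base=10.0):
    """Cologarithm of x: the negative of the logarithm base `base`."""
    return -math.log(x, base)


x = 0.25
via_negation = colog(x, base=2)              # -log2(0.25)
via_reciprocal_base = math.log(x, 1.0 / 2)   # log base 1/2 of 0.25

print(via_negation, via_reciprocal_base)  # both are 2, up to rounding
```

With this helper, Shannon entropy can be written as `sum(p * colog(p, 2) for p in probs)` with no leading minus sign.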
